Life is a Factory

Let’s be honest – Karl Marx was a bit long-winded in his efforts to explain to everyday people how capitalism organized life around the factory system. Marx was also writing in the middle of the 1800s, he had boils on his face, and he looked like Santa Claus. What could he have possibly known then that would apply to our present day? As it turns out – quite a lot!

Fast forward to the present. Firms like Google, Facebook, Amazon, Netflix, and Twitter have pushed the boundaries of capitalism into new territory. The factory is everywhere, resulting in what Shoshana Zuboff calls “surveillance capitalism” (see her article attached below). Everything we do can be reduced to a data point that can, in turn, be sold and repurposed for profit. The tools of the trade – cell phones, laptops, and cameras – are in the process of changing our relationship to each other as well as our relationship to everything around us. We are the human resources of these new industries; extraction by distraction, the manipulation of affect, and the dispossession of our data are what make us so valuable. Collectively, our thoughts, feelings, “likes,” and dreams are being monetized for profit. This behavioral data represents a boundless form of wealth accumulation, the limits of which are unthinkable. Every domain of social life is a potential target.

What we have here, in other words, is a new economic model, one whose goal, according to Zuboff, is “the harvest of behavioral surplus from people, bodies, things, processes, and places in both the virtual and the real world,” so that this can be transformed into profits and power. Crucial to these efforts are ubiquitous surveillance systems built on computing infrastructure – digital platforms – which provide for the mass siphoning of public information.

Capitalism, as Marx was fond of arguing, is constantly changing. There are always new Modes of Production. Consequently, as Zuboff argues, where profits once flowed from goods and services, they later came to flow from financial speculation. Today, surveillance and the monetization of mass behavioral data are fueling the economy. As a result, the predictive sciences – even prediction itself – have become the product, as companies compete for and sell our attention in the hope that they might alter our behavior.

Facebook Is Not Your Friend

Surveillance capitalism is not a conspiracy theory. To make sense of it, we might recall the eighteenth-century philosopher and social reformer, Jeremy Bentham, who designed the model panopticon as a prison to serve as an effective means of “obtaining the power of mind over mind, in a quantity hitherto without example.”

The Panopticon was envisioned as a type of institutional building. Bentham’s design concept idealized a single watchtower, whose watchman might observe (opticon) all (pan) occupants of the facility without them ever being able to discern whether or not they were being watched. This was, ideally, a circular structure with an “inspection house” where the management of the institution, stationed on a viewing platform, could watch people (prisoners, workers, children…students). Bentham conceived his basic plan as one that was equally applicable to hospitals, schools, sanatoriums, daycares, and asylums, but he devoted most of his efforts to developing a design for a Panopticon prison, and it is this prison that we most identify with the use of the term. In Bentham’s panopticon, prisoners are a form of menial labor. Not much has changed more than two centuries later.

The French philosopher Michel Foucault would later, in his work Discipline & Punish, point to the Panopticon as a metaphor for how disciplinary power functions in society. The key point Foucault identifies is that people at some point learn to internalize the gaze of the watchers. “Compulsory visibility” is a price we pay to live in modern society. This is what keeps everyone in line and maintains individuals as disciplined bodies and subjects. Think about this next time someone tells you “if you haven’t done anything wrong, you don’t have anything to hide,” as this is but one example of how people have come to internalize the panopticon to such an extent that they can no longer see they have been overcome by the logic of the system.

Now that you can recognize this, if you look hard enough, you will find the panopticon is everywhere. But the panopticon has evolved. Domination is no longer physical and doesn’t have to be achieved through confinement-based observation; it operates in ways that are more diffuse, where the targets of the watchers’ gaze actually participate in the terms of their own domination.

For example, think about Facebook and the ascendance of the “like” click as a way to control human behavior. Your compulsion to click on a digital object here derives not from a physical and external power exerted on your body, but rather from your manufactured consent. Your participation in socially mediated digital surveillance produces data so that you might, in turn, be controlled by a system that manipulates your emotions and desires to “share” with others.

Alternatively, we might apply the concept to an understanding of how our contemporary government might function as a police state, where round-the-clock surveillance, authoritarianism, totalitarianism, and militarization work together to functionally weaponize technology. The end result is relentless mass marketing and groupthink, all of which have become pervasive in society to such an extent that people have come to passively accept their lot in life as inmates/prisoners of the system – a system of their own making.

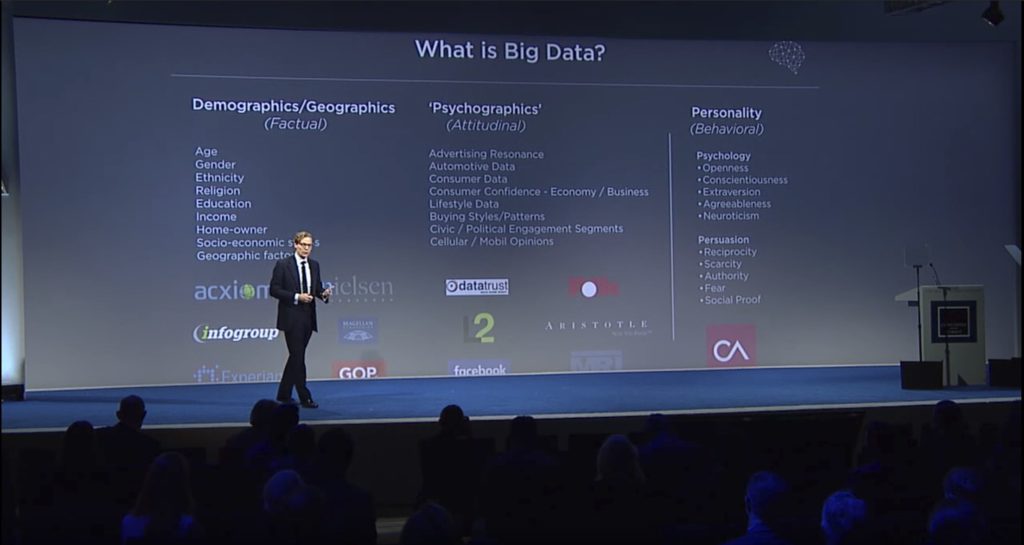

Facebook collects a lot of data from people and admits it. The recent Facebook/Cambridge Analytica revelations offer proof of this. People are collecting your data, storing it, and selling it every day in unforeseen ways. But people are also being recruited to inform on each other. Think about it: teachers are being turned into prison guards; students are monitoring and reporting on teachers with smartphones. People are being publicly evaluated all the time (Yelp, Uber, Airbnb). Fill out this survey and let me know how I did! Our devices are reporting our vital personal information even when we think they are not, often without our knowledge or understanding. And no one seems to care, so long as they can watch cat, puppy, and goat videos on their phone.

A smartphone user shows the Facebook application on his phone in this photo illustration. Facebook Inc’s mobile advertising revenue growth has continued to explode over the years, though it recently posted enormous losses in the wake of the Cambridge Analytica disclosures (REUTERS/Dado Ruvic).

Apart from the obvious dangers posed by a government that feels both justified and empowered to spy on its people, using technology to monitor and control them, we may be approaching a time where we will be forced to choose between obeying the demands of government—i.e., the law, or whatever a government official deems the law to be—and maintaining our individuality, integrity, and independence.

These developments, furthermore, have enormous implications for social inequality to the extent that the new economic model/surveillance state is not being run for the benefit of all its citizens to pursue life, liberty, and happiness. Rather, it is aimed at serving the profit motives of the people who control the technology, to the benefit of those with wealth and power.

We Are All Prisoners. Everything is Jail

As was stated above, Foucault theorized the Panopticon as a “mechanism of power reduced to its ideal form.” According to Foucault, the panopticon automatizes and disindividualizes power. Consequently, it doesn’t matter who exercises the power. Power produces homogeneous effects in populations to the extent that it creates a cruel but ingenious cage. At the same time, it creates populations of people who develop an affinity for their imposed as well as self-made prisons and the information ties that bind them.

Americans, in particular, are prone to boast and claim emphatically that they are “free.” They like to point to their guns and the Second Amendment as the ultimate guarantors of their freedom. But to what extent are you really free if your every movement can be monitored, uploaded, stored, and recalled for any reason?

Here are some additional questions to ponder:

How have you become accustomed to your social “chains” (in whatever form they take)?

How have you allowed your comfort and your acquired false sense of security to render you powerless to resist?

How does technology, absent the physical coercion of interrogation tactics, torture, and hallucinogenic drugs, engage in softer forms of mind control, identity theft, dream manipulation, and other kinds of social conditioning and indoctrination that “persuade” us all to comply and subjugate ourselves to the will of the powers-that-be?

How does one maintain their freedom in a society where prison walls are disguised within the trappings of technological and scientific progress, national security and so-called democracy?

Does standing for the national anthem at a sporting event, or “thanking” soldiers for their service, suggest that a citizenry and a people are free? Or do these things merely offer the comforting illusion of freedom, all while functioning like a prison, where people have essentially become inmates of a system that controls, monitors, and disciplines them?

Police Panopticon

The American police state in many ways functions like a metaphorical panopticon. That is, American society is a circular prison, where “inmates” are monitored by a virtual watchman situated in a central tower. Because the inmates cannot see the watchman, they are unable to tell whether or not they are being watched at any given time and must proceed under the assumption that they are always being watched.

As a case in point, the New York City Police Department has the largest police budget in the United States and, with it, one of the largest budgets for conducting surveillance operations on citizens. After the 9/11 attacks, the NYPD purchased and deployed a fleet of mobile surveillance towers to monitor what were deemed to be “hot spots” (high-crime areas) throughout the city. The Mobile Utility Surveillance Towers (M.U.S.T.) are self-contained mobile units with a surveillance platform that extends from a conversion van. These vans have been used to monitor NATO summit protests in places like Seattle and Chicago and they are sometimes found on the Texas-Mexico border, but their deployment in New York marks the first time they’ve been employed by the NYPD.

NYC residents are less than enthusiastic about the towers and beefed-up security presence. East Village residents criticized the deployment of the M.U.S.T. units and demanded that the NYPD get rid of the ones they erected in Tompkins Square Park. Instead of round-the-clock surveillance, they want foot patrols, where “officer friendly” walks a beat — not Big Brother spying from a surveillance tower.

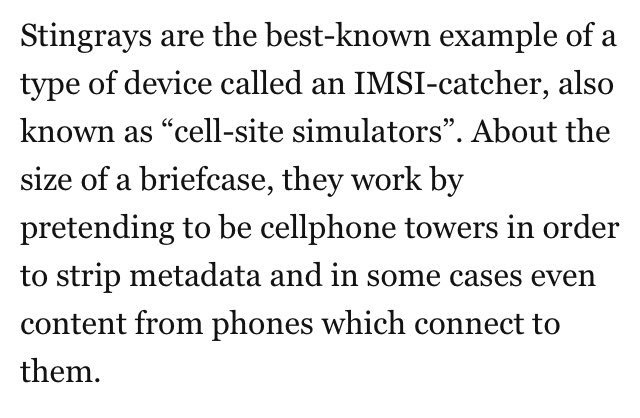

In addition to the towers, the NYPD is making use of what is referred to as “Stingray” technology, which enables them to track citizens’ cell phones without warrants. According to public records obtained by the New York Civil Liberties Union, the NYPD is estimated to have tracked cell phones over 1,000 times since 2008. As of now, the department does not have a policy guiding how police can use the controversial devices (McCarthy).

Stingray devices work by mimicking cell tower signals, which lets them track a cell phone’s location at a specific time. Law enforcement agencies can use the technology to track people’s movements through their cell phone use. Stingrays can also detect the phone numbers that a person has been communicating with, according to the NYCLU. The devices allow law enforcement to bypass cell phone carriers, who have provided information to police in the past. Moreover, they can collect data about bystanders in close proximity to their intended targets (McCarthy).

Mariko Hirose, the NYCLU attorney who filed the records request, said the records reveal knowledge about NYPD’s stingray use that should have been divulged before police decided to start using them. “When local police agencies acquire powerful surveillance technologies like stingrays the communities should get basic information about what kind of power those technologies give to local law enforcement” (McCarthy).

The Secrets of Surveillance Capitalism

Re-blog article by Shoshana Zuboff, “The Secrets of Surveillance Capitalism.”

You have probably noticed it already. There is a strange logic at the heart of the modern tech industry. The goal of many new tech startups is not to produce products or services for which consumers are willing to pay. Instead, the goal is to create a digital platform or hub that will capture information from as many users as possible — to grab as many ‘eyeballs’ as you can. This information can then be analyzed, repackaged and monetized in various ways.

Google surpassed Apple as the world’s most highly valued company in January for the first time since 2010. (Back then each company was worth less than 200 billion. Now each is valued at well over 500 billion.) While Google’s lead lasted only a few days, the company’s success has implications for everyone who lives within the reach of the Internet. Why? Because Google is ground zero for a wholly new subspecies of capitalism in which profits derive from the unilateral surveillance and modification of human behavior. This is a new surveillance capitalism that is unimaginable outside the inscrutable high-velocity circuits of Google’s digital universe, whose signature feature is the Internet and its successors.

While the world is riveted by the showdown between Apple and the FBI, the real truth is that the surveillance capabilities being developed by surveillance capitalists are the envy of every state security agency. What are the secrets of this new capitalism, how do they produce such staggering wealth, and how can we protect ourselves from its invasive power?

“Most Americans realize that there are two groups of people who are monitored regularly as they move about the country. The first group is monitored involuntarily by a court order requiring that a tracking device be attached to their ankle. The second group includes everyone else…”

Some will think that this statement is certainly true. Others will worry that it could become true. Perhaps some think it’s ridiculous. It’s not a quote from a dystopian novel, a Silicon Valley executive, or even an NSA official. These are the words of an auto insurance industry consultant intended as a defense of “automotive telematics” and the astonishingly intrusive surveillance capabilities of the allegedly benign systems that are already in use or under development. It’s an industry that has been notoriously exploitative toward customers and has had obvious cause to be anxious about the implications of self-driving cars for its business model. Now, data about where we are, where we’re going, how we’re feeling, what we’re saying, the details of our driving, and the conditions of our vehicle are turning into beacons of revenue that illuminate a new commercial prospect. According to the industry literature, these data can be used for dynamic real-time driver behavior modification triggering punishments (real-time rate hikes, financial penalties, curfews, engine lock-downs) or rewards (rate discounts, coupons, gold stars to redeem for future benefits).

Bloomberg Businessweek notes that these automotive systems will give insurers a chance to boost revenue by selling customer driving data in the same way that Google profits by collecting information on those who use its search engine. The CEO of Allstate Insurance wants to be like Google. He says, “There are lots of people who are monetizing data today. You get on Google, and it seems like it’s free. It’s not free. You’re giving them information; they sell your information. Could we, should we, sell this information we get from people driving around to various people and capture some additional profit source…? It’s a long-term game.”

Who are these “various people” and what is this “long-term game”? The game is no longer about sending you a mail order catalogue or even about targeting online advertising. The game is selling access to the real-time flow of your daily life – your reality – in order to directly influence and modify your behavior for profit. This is the gateway to a new universe of monetization opportunities: restaurants who want to be your destination. Service vendors who want to fix your brake pads. Shops who will lure you like the fabled Sirens. The “various people” are anyone and everyone who wants a piece of your behavior for profit. Small wonder, then, that Google recently announced that its maps will not only provide the route you search but will also suggest a destination.

You Are Being Measured

You probably don’t give it much thought, but you are constantly being measured. This occurs even when you are doing mundane things like driving your car and walking down the street. License plate numbers are being harvested en masse, alongside the faces of the people in the cars they are attached to. The data is typically stored, and there is very little regulation currently on the books to govern who might access it and how. For more on this, check out the article “Algorithmic Regulation.”

The goal: change people’s actual behavior at scale

This is just one peephole, in one corner, of one industry, and the peepholes are multiplying like cockroaches. The Chief Data Scientist of a much-admired Silicon Valley company that develops applications to improve students’ learning once told me, “The goal of everything we do is to change people’s actual behavior at scale. When people use our app, we can capture their behaviors, identify good and bad behaviors, and develop ways to reward the good and punish the bad. We can test how actionable our cues are for them and how profitable for us”.

The very idea of a functional, effective, affordable product as a sufficient basis for economic exchange is dying. The sports apparel company Under Armour is reinventing its products as wearable technologies. The CEO wants to be like Google. He says, “If it all sounds eerily like those ads that, because of your browsing history, follow you around the Internet, that’s exactly the point–except Under Armour is tracking real behavior and the data is more specific… making people better athletes makes them need more of our gear.” The examples of this new logic are endless, from smart vodka bottles to Internet-enabled rectal thermometers and quite literally everything in between. A Goldman Sachs report calls it a “gold rush,” a race to “vast amounts of data.”

The assault on behavioral data

We’ve entered virgin territory here. The assault on behavioral data is so sweeping that it can no longer be circumscribed by the concept of privacy and its contests. This is a different kind of challenge now, one that threatens the existential and political canon of the modern liberal order defined by principles of self-determination that have been centuries, even millennia, in the making. I am thinking of matters that include, but are not limited to, the sanctity of the individual and the ideals of social equality; the development of identity, autonomy, and moral reasoning; the integrity of contract, the freedom that accrues to the making and fulfilling of promises; norms and rules of collective agreement; the functions of market democracy; the political integrity of societies; and the future of democratic sovereignty. In the fullness of time, we will look back on the establishment in Europe of the “Right to be Forgotten” and the EU’s more recent invalidation of the Safe Harbor doctrine as early milestones in a gradual reckoning with the true dimensions of this challenge.

There was a time when we laid responsibility for the assault on behavioral data at the door of the state and its security agencies. Later, we also blamed the cunning practices of a handful of banks, data brokers, and Internet companies. Some attribute the assault to an inevitable “age of big data,” as if it were possible to conceive of data born pure and blameless, data suspended in some celestial place where facts sublimate into truth.

Capitalism has been hijacked by surveillance

I’ve come to a different conclusion: The assault we face is driven in large measure by the exceptional appetites of a wholly new genus of capitalism, a systemic coherent new logic of accumulation that might be thought of as surveillance capitalism.

Capitalism has been hijacked by a lucrative surveillance project that subverts the “normal” evolutionary mechanisms associated with its historical success and corrupts the unity of supply and demand that has for centuries, however imperfectly, tethered capitalism to the genuine needs of its populations and societies, thus enabling the expansion of market democracy.

Surveillance capitalism is different; it’s a novel economic mutation bred from the clandestine coupling of the vast powers of the digital with the radical indifference and intrinsic narcissism of the financial capitalism and its neoliberal vision that have dominated commerce for at least three decades, especially in the Anglo economies. It is an unprecedented market form that roots and flourishes in lawless space. It was first discovered and consolidated at Google, then adopted by Facebook, and quickly diffused across the Internet. Cyberspace was its birthplace because, as Google/Alphabet Chairperson Eric Schmidt and his co-author, Jared Cohen, celebrate on the very first page of their book about the digital age, “the online world is not truly bound by terrestrial laws…it’s the world’s largest ungoverned space.”

While surveillance capitalism taps the invasive powers of the Internet as the source of capital formation and wealth creation, it is now, as I have suggested, poised to transform commercial practice across the real world too. An analogy is the rapid spread of mass production and administration throughout the industrialized world in the early twentieth century, but with one major caveat. Mass production was interdependent with its populations who were its consumers and employees. In contrast, surveillance capitalism preys on dependent populations who are neither its consumers nor its employees and are largely ignorant of its procedures.

Internet access is a fundamental human right

We once fled to the Internet as solace and solution, our needs for effective life thwarted by the distant and increasingly ruthless operations of late twentieth-century capitalism. In less than two decades after the Mosaic web browser was released to the public enabling easy access to the World Wide Web, a 2010 BBC poll found that 79% of people in 26 countries considered Internet access to be a fundamental human right. This is the Scylla and Charybdis of our plight. It is nearly impossible to imagine effective social participation – from employment, to education, to healthcare – without Internet access and know-how, even as these once flourishing networked spaces fall to a new and even more exploitative capitalist regime. It’s happened quickly and without our understanding or agreement. This is because the regime’s most poignant harms, now and later, have been difficult to grasp or theorize, blurred by extreme velocity and camouflaged by expensive and illegible machine operations, secretive corporate practices, masterful rhetorical misdirection, and purposeful cultural misappropriation.

Taming this new force depends upon careful naming. This symbiosis of naming and taming is vividly illustrated in the recent history of HIV research, and I offer it as analogy. For three decades scientists aimed to create a vaccine that followed the logic of earlier cures, training the immune system to produce neutralizing antibodies, but mounting data revealed unanticipated behaviors of the HIV virus that defy the patterns of other infectious diseases.

HIV research as analogy

The tide began to turn at the International AIDS Conference in 2012, when new strategies were presented that rely on a close understanding of the biology of rare HIV carriers whose blood produces natural antibodies. Research began to shift toward methods that reproduce this self-vaccinating response. A leading researcher announced, “We know the face of the enemy now, and so we have some real clues about how to approach the problem.” The point for us is that every successful vaccine begins with a close understanding of the enemy disease. We tend to rely on mental models, vocabularies, and tools distilled from past catastrophes (i.e., the twentieth century’s totalitarian nightmares or the monopolistic predations of Gilded Age capitalism). But the vaccines we developed to fight those earlier threats are not sufficient or even appropriate for the novel challenges that we face today.

An evolutionary dead-end

Surveillance capitalism is not the only current modality of information capitalism, nor is it the only possible model for the future. To be sure, however, its fast track to capital accumulation and rapid institutionalization has made it the default model of information capitalism.

A cure depends upon many individual, social, and legal adaptations, but I am convinced that fighting the “enemy disease” cannot begin without a fresh grasp of the novel mechanisms that account for surveillance capitalism’s successful transformation of investment into capital. This has been one focus of my work in a new book, Master or Slave: The Fight for the Soul of Our Information Civilization, which will be published early next year. In the short space of this essay, I’d like to share some of my thoughts on this problem.

Fortune telling and selling

New economic logics and their commercial models are discovered by people in a time and place and then perfected through trial and error. Ford discovered and systematized mass production. General Motors institutionalized mass production as a new phase of capitalist development with the discovery and perfection of large-scale administration and professional management. In our time, Google is to surveillance capitalism what Ford and General Motors were to mass-production and managerial capitalism a century ago: discoverer, inventor, pioneer, role model, lead practitioner, and diffusion hub.

Specifically, Google is the mothership and ideal type of a new economic logic based on fortune telling and selling, an ancient and eternally lucrative craft that has exploited the human confrontation with uncertainty from the beginning of the human story. Paradoxically, the certainty of uncertainty is both an enduring source of anxiety and one of our most fruitful facts. It produced the universal need for social trust and cohesion, systems of social organization, familial bonding, and legitimate authority, the contract as formal recognition of reciprocal rights and obligations, and the theory and practice of what we call “free will.” When we eliminate uncertainty, we forfeit the human replenishment that attaches to the challenge of asserting predictability in the face of an always-unknown future in favor of the blankness of perpetual compliance with someone else’s plan.

Only incidentally related to advertising

Most people credit Google’s success to its advertising model. But the discoveries that led to Google’s rapid rise in revenue and market capitalization are only incidentally related to advertising. Google’s success derives from its ability to predict the future – specifically the future of behavior. Here is what I mean:

From the start, Google had collected data on users’ search-related behavior as a byproduct of query activity. Back then, these data logs were treated as waste, not even safely or methodically stored. Eventually, the young company came to understand that these logs could be used to teach and continuously improve its search engine.

The problem was this: Serving users with amazing search results “used up” all the value that users created when they inadvertently provided behavioral data. It’s a complete and self-contained process in which users are ends-in-themselves. All the value that users create is reinvested in the user experience in the form of improved search. In this cycle, there was nothing left over for Google to turn into capital. As long as the effectiveness of the search engine needed users’ behavioral data about as much as users needed search, charging a fee for service was too risky. Google was cool, but it wasn’t yet capitalism – just one of many Internet startups that boasted “eyeballs” but no revenue.

Shift in the use of behavioral data

The year 2001 brought the dot.com bust and mounting investor pressures at Google. Back then advertisers selected the search term pages for their displays. Google decided to try and boost ad revenue by applying its already substantial analytical capabilities to the challenge of increasing an ad’s relevance to users – and thus its value to advertisers. Operationally this meant that Google would finally repurpose its growing cache of behavioral data. Now the data would also be used to match ads with keywords, exploiting subtleties that only its access to behavioral data, combined with its analytical capabilities, could reveal.

It’s now clear that this shift in the use of behavioral data was an historic turning point. Behavioral data that were once discarded or ignored were rediscovered as what I call behavioral surplus. Google’s dramatic success in “matching” ads to pages revealed the transformational value of this behavioral surplus as a means of generating revenue and ultimately turning investment into capital. Behavioral surplus was the game-changing zero-cost asset that could be diverted from service improvement toward a genuine market exchange. Key to this formula, however, is the fact that this new market exchange was not an exchange with users but rather with other companies who understood how to make money from bets on users’ future behavior. In this new context, users were no longer an end-in-themselves. Instead, they became a means to profits in a new kind of marketplace in which users are neither buyers nor sellers nor products. Users are the source of free raw material that feeds a new kind of manufacturing process.

While these facts are known, their significance has not been fully appreciated or adequately theorized. What just happened was the discovery of a surprisingly profitable commercial equation – a series of lawful relationships that were gradually institutionalized in the sui generis economic logic of surveillance capitalism. It’s like a newly sighted planet with its own physics of time and space, its sixty-seven hour days, emerald sky, inverted mountain ranges, and dry water.

A parasitic form of profit

The equation: First, the push for more users and more channels, services, devices, places, and spaces is imperative for access to an ever-expanding range of behavioral surplus. Users are the human nature-al resource that provides this free raw material. Second, the application of machine learning, artificial intelligence, and data science for continuous algorithmic improvement constitutes an immensely expensive, sophisticated, and exclusive twenty-first-century “means of production.” Third, the new manufacturing process converts behavioral surplus into prediction products designed to predict behavior now and soon. Fourth, these prediction products are sold into a new kind of meta-market that trades exclusively in future behavior. The better (more predictive) the product, the lower the risks for buyers, and the greater the volume of sales. Surveillance capitalism’s profits derive primarily, if not entirely, from such markets for future behavior.

While advertisers have been the dominant buyers in the early history of this new kind of marketplace, there is no substantive reason why such markets should be limited to this group. The already visible trend is that any actor with an interest in monetizing probabilistic information about our behavior and/or influencing future behavior can pay to play in a marketplace where the behavioral fortunes of individuals, groups, bodies, and things are told and sold. This is how in our own lifetimes we observe capitalism shifting under our gaze: once profits from products and services, then profits from speculation, and now profits from surveillance. This latest mutation may help explain why the explosion of the digital has failed, so far, to decisively impact economic growth, as so many of its capabilities are diverted into a fundamentally parasitic form of profit.

Unoriginal Sin

The significance of behavioral surplus was quickly camouflaged, both at Google and eventually throughout the Internet industry, with labels like “digital exhaust,” “digital breadcrumbs,” and so on. These euphemisms for behavioral surplus operate as ideological filters, in exactly the same way that the earliest maps of the North American continent labeled whole regions with terms like “heathens,” “infidels,” “idolaters,” “primitives,” “vassals,” or “rebels.” On the strength of those labels, native peoples, their places and claims, were erased from the invaders’ moral and legal equations, legitimating their acts of taking and breaking in the name of Church and Monarchy.

We are the native peoples now whose tacit claims to self-determination have vanished from the maps of our own behavior. They are erased in an astonishing and audacious act of dispossession by surveillance that claims its right to ignore every boundary in its thirst for knowledge of and influence over the most detailed nuances of our behavior. For those who wondered about the logical completion of the global processes of commodification, the answer is that they complete themselves in the dispossession of our intimate quotidian reality, now reborn as behavior to be monitored and modified, bought and sold.

The process that began in cyberspace mirrors the nineteenth-century capitalist expansions that preceded the age of imperialism. Back then, as Hannah Arendt described it in The Origins of Totalitarianism, “the so-called laws of capitalism were actually allowed to create realities” as they traveled to less developed regions where law did not follow. “The secret of the new happy fulfillment,” she wrote, “was precisely that economic laws no longer stood in the way of the greed of the owning classes.” There, “money could finally beget money,” without having to go “the long way of investment in production…”

“The original sin of simple robbery”

For Arendt, these foreign adventures of capital clarified an essential mechanism of capitalism. Marx had developed the idea of “primitive accumulation” as a big-bang theory –– Arendt called it “the original sin of simple robbery” –– in which the taking of lands and natural resources was the foundational event that enabled capital accumulation and the rise of the market system. The capitalist expansions of the 1860s and 1870s demonstrated, Arendt wrote, that this sort of original sin had to be repeated over and over, “lest the motor of capital accumulation suddenly die down.”

In his book The New Imperialism, geographer and social theorist David Harvey built on this insight with his notion of “accumulation by dispossession.” “What accumulation by dispossession does,” he writes, “is to release a set of assets…at very low (and in some instances zero) cost. Overaccumulated capital can seize hold of such assets and immediately turn them to profitable use…It can also reflect attempts by determined entrepreneurs…to ‘join the system’ and seek the benefits of capital accumulation.”

Breakthrough into “the system”

The process by which behavioral surplus led to the discovery of surveillance capitalism exemplifies this pattern. It is the foundational act of dispossession for a new logic of capitalism built on profits from surveillance that paved the way for Google to become a capitalist enterprise. Indeed, in 2002, Google’s first profitable year, founder Sergey Brin relished his breakthrough into “the system”, as he told Levy,

“Honestly, when we were still in the dot-com boom days, I felt like a schmuck. I had an Internet start-up –– so did everybody else. It was unprofitable, like everybody else’s, and how hard is that? But when we became profitable, I felt like we had built a real business.”

Brin was a capitalist all right, but it was a mutation of capitalism unlike anything the world had seen. Once we understand this equation, it becomes clear that demanding privacy from surveillance capitalists or lobbying for an end to commercial surveillance on the Internet is like asking Henry Ford to make each Model T by hand. It’s like asking a giraffe to shorten its neck or a cow to give up chewing. Such demands are existential threats that violate the basic mechanisms of the entity’s survival. How can we expect companies whose economic existence depends upon behavioral surplus to cease capturing behavioral data voluntarily? It’s like asking for suicide.

More behavioral surplus for Google

The imperatives of surveillance capitalism mean that there must always be more behavioral surplus for Google and others to turn into surveillance assets, master as prediction, sell into exclusive markets for future behavior, and transform into capital. At Google and its new holding company called Alphabet, for example, every operation and investment aims to increase the harvest of behavioral surplus from people, bodies, things, processes, and places in both the virtual and the real world. This is how a sixty-seven hour day dawns and darkens in an emerald sky. Nothing short of a social revolt that revokes collective agreement to the practices associated with the dispossession of behavior will alter surveillance capitalism’s claim to manifest data destiny.

What is the new vaccine? We need to reimagine how to intervene in the specific mechanisms that produce surveillance profits and in so doing reassert the primacy of the liberal order in the twenty-first century capitalist project. In undertaking this challenge we must be mindful that contesting Google, or any other surveillance capitalist, on the grounds of monopoly is a 20th century solution to a 20th century problem that, while still vitally important, does not necessarily disrupt surveillance capitalism’s commercial equation. We need new interventions that interrupt, outlaw, or regulate 1) the initial capture of behavioral surplus, 2) the use of behavioral surplus as free raw material, 3) excessive and exclusive concentrations of the new means of production, 4) the manufacture of prediction products, 5) the sale of prediction products, 6) the use of prediction products for third-order operations of modification, influence, and control, and 7) the monetization of the results of these operations. This is necessary for society, for people, for the future, and it is also necessary to restore the healthy evolution of capitalism itself.

A coup from above

In the conventional narrative of the privacy threat, institutional secrecy has grown, and individual privacy rights have been eroded. But that framing is misleading, because privacy and secrecy are not opposites but rather moments in a sequence. Secrecy is an effect; privacy is the cause. Exercising one’s right to privacy produces choice, and one can choose to keep something secret or to share it. Privacy rights thus confer decision rights, but these decision rights are merely the lid on the Pandora’s Box of the liberal order. Inside the box, political and economic sovereignty meet and mingle with even deeper and subtler causes: the idea of the individual, the emergence of the self, the felt experience of free will.

Surveillance capitalism does not erode these decision rights –– along with their causes and their effects –– but rather it redistributes them. Instead of many people having some rights, these rights have been concentrated within the surveillance regime, opening up an entirely new dimension of social inequality. The full implications of this development have preoccupied me for many years now, and with each day my sense of danger intensifies. The space of this essay does not allow me to follow these facts to their conclusions, but I offer this thought in summary.

Surveillance capitalism reaches beyond the conventional institutional terrain of the private firm. It accumulates not only surveillance assets and capital, but also rights. This unilateral redistribution of rights sustains a privately administered compliance regime of rewards and punishments that is largely free from detection or sanction. It operates without meaningful mechanisms of consent either in the traditional form of “exit, voice, or loyalty” associated with markets or in the form of democratic oversight expressed in law and regulation.

A profoundly anti-democratic power

In result, surveillance capitalism conjures a profoundly anti-democratic power that qualifies as a coup from above: not a coup d’état, but rather a coup des gens, an overthrow of the people’s sovereignty. It challenges principles and practices of self-determination ––in psychic life and social relations, politics and governance –– for which humanity has suffered long and sacrificed much. For this reason alone, such principles should not be forfeit to the unilateral pursuit of a disfigured capitalism. Worse still would be their forfeit to our own ignorance, learned helplessness, inattention, inconvenience, habituation, or drift. This, I believe, is the ground on which our contests for the future will be fought.

Hannah Arendt once observed that indignation is the natural human response to that which degrades human dignity. Referring to her work on the origins of totalitarianism she wrote, “If I describe these conditions without permitting my indignation to interfere, then I have lifted this particular phenomenon out of its context in human society and have thereby robbed it of part of its nature, deprived it of one of its important inherent qualities.”

So it is for me and perhaps for you: The bare facts of surveillance capitalism necessarily arouse my indignation because they demean human dignity. The future of this narrative will depend upon the indignant scholars and journalists drawn to this frontier project, indignant elected officials and policymakers who understand that their authority originates in the foundational values of democratic communities, and indignant citizens who act in the knowledge that effectiveness without autonomy is not effective, dependency-induced compliance is no social contract, and freedom from uncertainty is no freedom.

Sources

Shoshana Zuboff, “The Secrets of Surveillance Capitalism.”

The NYPD has Tracked Citizens’ Cellphones 1,000 Times Since 2008 Without Warrants, by Ciara McCarthy, 2016 (originally published in The Guardian)

Does having your personal data harvested and stored and potentially sold to future employers concern you on any level?

Do you think it is possible to have a system of social organization like capitalism without the negative aspects asserting themselves in such a dominant way (i.e. exploitation, aggressive policing, total surveillance)?

How might you draw from both Goffman and Foucault’s theoretical frameworks to explain these contemporary developments?

Will surveillance capitalism become the dominant logic of capital accumulation in our time, or will it be succeeded by yet another mode of capitalist accumulation?

What is the solution? What might you as an individual begin to do differently with regard to limiting the exposure of your personal data?

The best part about capitalism is that there will always be a new way of accumulating wealth, and that way will most likely outdate surveillance capitalism. We can either wait for someone to do it or try to do it ourselves! Will the new way involve our personal information? Will it involve companies’ transparency? These are questions we will have to answer sooner or later.

Having my personal data gathered and accumulated is very concerning to me. I may be old-fashioned, but surveillance capitalism is scary. I much prefer being the consumer and not the product. I also like to interact with people when they are real; in this world of “like” clicks, people are friendly so that they will be rated higher.

Capitalism unchecked will always run out of control; government regulations are regularly needed to keep companies from running out of control. These companies that profit from collecting and selling personal data need to have regulations put in place, limiting what they may and may not collect. People also need to be educated on what companies are doing; most older people on Facebook freely provide personal data without any concern about putting their information out there.

Yes, having my social media sold and stored does make me a little startled. With all of the new technology being created, I’m afraid that people can hack into things of mine. Also, the fact that we are always being monitored is weird, because everyone needs privacy, and if we are never considered to have privacy then maybe things need to change. Honestly, it feels like we are no longer safe without video surveillance to show proof of what really goes on in the world. People are starting to rely on it and are no longer going to do things to experience them for themselves.

My personal data being stored and given to future employers does bother me in different ways. My main issue with this is the fact that it is called personal information, but people have the option to look at it as they please. I think anything is possible, but with capitalism there is always a power-hungry, suspicious leader that makes this type of thing impossible. I would think surveillance capitalism is the best for keeping an eye on your citizens and keeping people safe in some respect, but there should be a limit that the surveillance is kept below. What I might do is lessen my time with electronics to lessen the chance of my personal data being thrown out in the public, but what the government can do is restrict its reach with things concerning people’s personal information.

Does having your personal data harvested and stored and potentially sold to future employers concern you on any level?

The answer to this question would be yes, it concerns me. There are many reasons why it concerns me, as this personal data is supposed to be private and companies should not take this information without my knowledge or permission. The first reason it concerns me is that companies will get their hands on certain information that could put them one step ahead of you in things such as markets, job opportunities, etc. They could basically blackmail you. Second, I believe that this information should be private and not sold. If anyone should sell it, it should be me.

Do you think it is possible to have a system of social organization like capitalism without the negative aspects asserting themselves in such a dominant way (i.e. exploitation, aggressive policing, total surveillance)?

The answer to this would be no. I believe this because without aggressive policing, people would be able to hide many things without repercussions.

Yes, having my personal data stored and sold bothers me. Social media has become increasingly popular throughout my generation. I have been on social media ever since the sixth grade, meaning that my immature self had access to social media. That was long before I matured and began to think critically about my actions and the words I speak. I also believe that people can change. So if a company is going to look at someone’s social media account, they should look to see how that person has changed throughout the years.

This “panopticon” observes our everyday actions and profits from the information about us that it collects. Various social media sites and other websites in general are watching our every action and learning about us. It’s kind of creepy. However, this type of surveillance that keeps track of all internet activity has been useful in detecting and preventing criminal activity.

Over the years I have started using social media a lot more frequently. Although I do not post much on my accounts, I find it interesting to see what others have to say and hear their opinions on the most recent topics that the world is focused on. I believe that it would be safe to say that many people are “addicted” to social media and the drama that it causes but to me it is something that I would not mind giving up. A lot of people that use this technology do not understand how important some of the things that they post truly are. People believe that they are able to put all of their information on their account and it will be protected from the public. Unfortunately this is not the case. Nobody should ever post too much personal information about themselves, and this is something that I believe I have been pretty good at compared to others.

The “panopticon,” as a modern-day theoretical watch tower watching all of us, actually isn’t that far off from what is happening to millions, possibly billions, of people who use the internet daily and just cannot seem to take their eyes away from their phones for five minutes. We’re all guilty of this habit, but it may be more dangerous than it seems at first glance. This is giving governments and large corporations (like Google and Facebook) immeasurable amounts of power over individuals. They have so much data on a person that they probably know more about someone than their own friends do. This isn’t necessarily a bad thing in a vacuum, but in reality its primary purpose is to make more and more money for the corporations and give more and more power to the superpower governments of the world, while simultaneously taking more and more power away from the individual. The watch tower panopticon theoretical model seems to have come quite true, possibly without the prison aspect in most cases, in the digital age where everyone and their brother has a smartphone in their pocket at all times with no shortage of datalogging “features” built right into it.

The idea of my personal data being stored and potentially being sold to future employers does concern me. I do watch what I post, but employers do not need my personal data to decide whether or not I am qualified for the position or a promotion. I can understand employers wanting to look over your public profiles, but going through every single nook and cranny is not necessary. Unfortunately, this is the day we live in: we post everything on social media and then think about what we are saying or posting about later. I do not think it is possible to have a system like capitalism without the negative aspects being so prominent. People will exploit the smallest things against someone or something that they do not like for their own benefit. People are greedy and will do almost anything to get themselves ahead of competitors. There will always be a group of people who think their friend or family member was handled too aggressively by the police. I’m not saying there isn’t a problem with police using excessive force, but even in the smallest situations there will always be someone to disagree with what is going on. With people being so open on social media, total surveillance is inevitable. Some people post anything and everything on their Facebook account, including where they are and what they are doing. Do I think total surveillance is right? Absolutely not, but it is here and we cannot continue to pretend like it’s not going on.

Having my information sold to future employers does not really concern me that much because I feel that I do not post inappropriate things. However, I do feel that it is an invasion of privacy. There are many people who post inappropriate things on social media, and that would put them at great risk of losing job opportunities in the future. They view it as a place to freely post about their life and do not understand that there could be negative effects in the future. Having my information sold to possible future employers is a bit alarming to some degree because it makes me wonder what the next step will be. What will this escalate and lead to in the future regarding privacy?

I have personally given this topic a lot of thought in regard to what information I allow the internet to access. I am not at all concerned with my personal data being sold. Social media is “free,” although nothing in life truly is; that’s the price you are paying for using these sites. If I wanted my data to be kept to myself I would not use social media. Capitalism and its effects have been going on since long before social media had this platform. However, I think the negative effects have been amplified. Goffman addressed the control and corruption of hospitalization, especially in mental institutions. The patients conformed to the institutions. This is a similar pattern to what we are seeing in capitalism. Foucault saw the negative effects on people who do not conform to societal norms, which again we see in capitalism. I personally don’t see many solutions to “surveillance capitalism”; even with privacy settings and your own self-censoring, it is still hard to control what your affiliates can put up about you. It seems as though we are constantly in the public eye at this point.

In my opinion, your personal data is YOUR personal data. I do not think that it should be able to be stored and “potentially” sold to future employers, even though no information is safe nowadays. But with how technology is advancing today, almost every single application that you use stores your data, whether or not that platform shares the data it collects. When you download new apps onto your smartphone, you always get prompted on whether you would like to share data with the app creator and whether or not you would like to share your location. Why would they need to know your location? If they have access to that, what else do they have access to? Another thing to think about is Facebook. Almost every single person in the world has either heard of Facebook or has a Facebook account to see what all their friends are doing. Facebook is constantly sharing information, and you can basically find stuff out about people with a basic Google search. Where they live, their phone number, who their family is, and where they work can all be found out from Google. Yes, this concerns me, but I do not think that anyone can do anything about it anymore. Private data is constantly being shared with other people, especially if you have every social media account under the sun, including Myspace, Twitter, Facebook, Instagram, Tumblr, and WhatsApp. The future is inevitable and is coming whether we like it or not.

I believe if my personal data is going to be stored then it should be in a trustworthy place. However, in today’s society, being on the internet makes us much more susceptible to having our information stolen than ever before. These websites should not be able to access your personal information without your permission. Even though it’s so dangerous, it’s easy to see why we give up our own privacy in order to maintain these social norms and keep up with the rest of the world we live in. When huge organizations like Facebook, Twitter and Instagram introduce a new social service, they usually are successful, due to their ever-growing popularity. These organizations sell our information without our knowledge and make a huge profit on it. I think this is concerning and is very invasive in many different ways. The only way something will change is if people become aware of what the companies are doing to them. Once people become more informed, they will realize how dangerous these companies really are.

Having my personal data stored and sold to companies does not particularly concern me, mainly because I stay aware of how these things are retrieved and I protect myself. Employers are usually going to do a background check to begin with. Being careful about what you post on the internet is a good start, considering it’s there forever whether it’s deleted or not. People need to be proactive about what they are doing on the internet today and how it can easily affect their future. Sharing something might just be the reason that somebody else gets a job over you someday.

When it comes to the concern of my personal data being hacked, and also collected by Facebook, I really don’t find myself worried. Ever since the rise of social media, online banking, and just surfing the web in general, we have seen more reports of hacking and stolen identities. Now, since all this personal information is already out there, there is not much we can do. It doesn’t matter if you only sent one payment; that information is stored on a server among millions of others. If a hacker wants to access this information, and they know what they’re doing, then it is not hard at all. We have talked about it in some of my former IST classes, and it is actually incredible how easy it is to tap into someone’s internet and steal information. I think three good safety precautions for anyone are to encrypt their data, refrain from using public wifi as much as possible, and store sensitive data offline. Although I believe that if someone wants access to most of your information, they can retrieve it in 20 minutes, it really can’t hurt to take some precautions. Another thing addressed in this post was government surveillance. I saw someone mention that a good precaution is to turn off location services. The sad thing is, this only stops that specific app from sharing your location. If you own a new smartphone, then it is almost certain it is equipped with an accelerometer. This is a chip that measures whether your phone is horizontal or vertical. Just like your fingerprint, your phone and its signal are significantly unique on a micro-level. By analyzing the accelerometer’s data signals in detail, you can obtain all the data that it has ever collected, or your “fingerprint”. The scariest thing about this is that the accelerometer is not the only thing collecting data in your phone without prompting you.

Capitalism without the negative aspects just doesn’t work. The government is so deep into surveillance that it would be almost impossible for it to stop monitoring completely. Phone or social media surveillance has become the new norm. The only reason some people are in an uproar (i.e., younger people who don’t read terms and conditions) is that the media is now blasting Facebook all over for personal information leaks. In 2018 we’re more concerned with that next like, or that random survey of what kind of pizza I would be if my name is Kyle, than with understanding that all your locations, hometown and name are easily traceable and monitored by God knows who. What you put on any social media platform can be looked up by a future employer. You could have just had your first interview, and you’re up against a fellow candidate who has all the same qualities. They do a little background digging and come to find there are pictures blasted all over Facebook of you smoking weed and partying and using foul language, while your counterpart doesn’t have Facebook but has Twitter, and the only posts he has are of sports events, games and talk shows. They choose him over you. You have to be cautious about what you’re posting!!

In all honesty, I am very concerned. It is disturbing to think that my personal information is being sold for advertising. Is the only thing they care about money?? It makes me so angry that all of my information is saved; frankly, it is unfair. I use Google every single day and it makes me uncomfortable knowing all of that information is saved, especially when the ads pop up on Instagram and such. It’s just weird. Especially when I think about something and know for sure I never searched it. Seems suspicious to me… Anyways, I for one do not have a Facebook. I have no use for it and think it is overrated, but that does not mean I am not at risk just as everyone else is. It really bothers me that no one sees anything wrong with this, except those who are being monitored. This is a complete invasion of privacy but nothing is being done about it. It’s completely wrong! This really makes me think about the book 1984, because in all honesty it’s true. Big Brother IS always watching. I am a part of 4 or 5 social media sites and none of my accounts are private, and seeing what has been happening really makes me second-guess my choice. However, I have nothing to hide. I don’t think I am doing anything wrong, and I don’t post on Twitter, so my unpopular opinion isn’t out there. I’m just worried this could all be a part of something bigger. I know I’m just being paranoid, but you never know. I haven’t done anything to minimize what is being collected about me because what am I supposed to do? Stop using the internet? Delete all social media? As sad as it is to say, I could not delete it. Maybe Twitter, but not anything else. I’m worried for the future and what else this information can be used for. Technology keeps advancing, so we don’t know what the future has in store for us.

I have been aware of the problems coming from privacy and data being harvested for quite some time now, which is why I am wary of what information I keep on the web and what cookies I have enabled on what sites. Even though I have been aware, it is still concerning knowing that personal information can be stored from YOUR own clicks without you being aware. I don’t think it is possible to have anything without having some sort of negative aspect. If we were under total surveillance, the idea of freedom of speech would already be under attack. Even though there are some positives, the cons of the idea completely outweigh the pros. Goffman and Foucault both look at the same idea but from different perspectives. Goffman is supporting the idea of surveillance so there is a standard for the population, and anyone who falls out of that standard can be marked as suspicious and people can be aware. Foucault believes that having standards leads to everyone regressing to the same monotonous standard, and anyone who chooses to have a voice will be made to be out of the social standard, making it harder for people to have an individual voice. It’s actually really difficult to tell which way our generation will swing when it comes to dominant logic. Some are aware, some aren’t, but with how easy it is to spread news and messages, it shouldn’t be difficult to make people aware. At this point, there really is no solution. Just be aware of the places you’re putting your information.

This whole dilemma of privacy and surveillance and advertising, etc., is something I’ve already thought a lot about. With this article in particular, however, the whole idea that one’s information is never safe, that once it’s on the internet, it’s there for good, isn’t a brand new one. Given the fear that security settings are never enough to properly protect someone’s information, the constant fear that our valuable information may get hacked, and the fact that identity theft is such a growing problem, it seems like you would have ample reason to be paranoid no matter what you did on the internet. Google alone has millions of people’s information, including social security and credit card numbers, and it would seem that one privatized company having that much power is extremely dangerous. I’m not saying it isn’t dangerous, but to be frank, I find that all of this fear is a result of people not having faith and trust in others. Yes, the world is a terrible place, where people wage wars on each other and people can get sued for even performing CPR to try and save someone’s life, but this idea that privacy is something that can always be cherished and valued is one that can’t be upheld anymore.

Sure, it may be simple enough to think that keeping your information offline will keep it safe, but that overlooks other forms of information theft. Cameras and scanners placed in gas station machines, cash registers, ATMs, etc. to capture pictures and credit card numbers are just one instance of this.

It doesn’t matter what you do, and it doesn’t matter how much you hide yourself. Privacy is a thing of the past, especially in the society we live in. To give an example, a man named Eric Clanton violently attacked multiple people with a bike lock during a riot in May of 2017. In video footage, he was cloaked from head to toe in black, and he even had a crowd to slink back into to further hide himself, even after hitting a man in the head so hard that he fractured his skull. It wasn’t even professionals who caught him. People from Reddit, of all places, tracked the man down by going through every piece of footage of the event they could find. They found his address, phone numbers, pictures, and occupation, and then forwarded all the information to the police. He was arrested, and is now in prison for over 5 years for assault with a deadly weapon. A good telling of this event is done by a YouTuber, found here: https://www.youtube.com/watch?v=muoR8Td44UE

But that instance doesn’t even take into account what actual professionals can do, as opposed to random strangers on the internet. A company called Persistent Surveillance Systems has the ability to take pictures of entire cities using drones from miles above, and to follow individual cars and people in order to solve crimes. For a more detailed story, I’d suggest listening to RadioLab’s podcast on this subject, found here: http://www.radiolab.org/story/update-eye-sky/

So I get back to my point. This entire dilemma of privatized surveillance, or surveillance capitalism, as with Persistent Surveillance Systems, may seem scary, and it may seem like some horrid breach of privacy. But I’ll reiterate that in this modern day and age, with technology at our fingertips and cameras on every person, nothing can be kept secret anymore. To try and fight against this is a fight completely and utterly in vain.

Having my personal data taken without my consent and sold to anyone (employers, advertisers, government entities, etc.) is extremely concerning. I have a very limited social media presence and I never put any personal data or even post at all on social sites, but I definitely look things up on Google and use the internet daily. If my preferences, habits, or personal information are being taken from those sites, it’s even more concerning than if they were just being harvested from things that I post, because I clearly intended that information to be private. Capitalism, and the drive for governments and large companies to know everything they can about their consumers or citizens, certainly drives the data harvesting that we see Facebook being called out for now. Knowing their customer base can help profit-driven organizations sell their products better and can make things more accessible for us consumers (when ads are catered directly to us, they have more relevance than randomly generated ones). But the fact that data is an easily exploitable, minimally regulated commodity means that companies with the ability to gather personal information and sell it to a third party for a profit have the legal right and financial imperative to do so, at least until privacy laws catch up with the current state of digital technology. Some people might be nostalgic for the days gone by when smartphones and computers weren’t such an integral part of daily life, but now that they are, the idea that the devices we use to make our lives easier or more functional can be used to monitor us, with that information sold to the highest bidder, is nothing short of Orwellian.

While it is concerning, I don’t feel that companies having my information is a threat. If I were up to no good I might feel worried, but I have nothing to hide. And if I did, I wouldn’t be using the clear net… Companies just want to know what to make more of and what to start advertising. They want to know how they’ll profit the most. Good on them, I guess. The thing that worries me a little is how easy it is to hack someone. Having your money and identity stolen from you is so easy. And hackers don’t get caught.

There are search engines that totally dismantle any usable data that a user inputs. While I don’t use one of these, I use a search engine that uses much of its profit to plant trees. At least I know the money they’re making from using me is going to a good place. I believe that in order to make a lot of money, companies have to be in your face. There are good companies out there that do good things with the money they make, but we don’t hear about them because they aren’t all over our screens and billboards.

Technology is still changing, and so will the ways that companies gather our information. Cars are getting more advanced: companies used to track our GPS devices, but now they can just track the car itself. Like the blog said, there are clothes with technology that allows the company to track the customer. Snapchat keeps track of people’s snaps. The iPhone categorizes your pictures by tracking what’s in each picture. All kinds of crazy stuff.

The fact that everything I work for and do successfully can be shared, and then harvested and built on in a positive way by another person, is actually pretty interesting. But the fact that my success can also be harvested in a negative way, ruining what I could grow to have, is what concerns me. It is not the fact that someone is “taking” what I have, but rather the fact that it could be ruined under another name. I do not believe that society can have a social organization like capitalism work successfully without ANY corruption. The reason is that I think corruption is everywhere. In any category or any organization, there is corruption somewhere. I think it has a lot to do with greed and people’s need for more and more money. I think the surveillance system should and will be successful because of the constant need for it. To keep everything valuable safe, you need heavy surveillance. Obviously what I would do, like most others, is stay off of social media. I do stay away from social media, but I might be on others, and that is what I can steer away from. People do not need to know what you are doing at all times, and staying off can save a lot and prevent a lot from being stolen.

Honestly, I’m absolutely concerned. Every single thing we do is being monitored and captured in some way. It is terrifying that everything we do is observed, and it is no doubt getting worse. I realized that all my stuff could be tracked; however, I didn’t realize that it could be sold to present and future employers. From a young age my parents always taught me to be careful what I post, since it can always be traced even if you delete it. It’s scary to think that one poor choice or post could ruin a job opportunity. And who says anyone is the same person they were 10 years ago, when they made a poor choice or posted something they shouldn’t have? I don’t think that necessarily shows someone’s work ethic. I don’t have anything to hide, but it takes away my privacy. I am not worried about what they will find, because I am not constantly posting every little detail about my life on social media. I may want to work for a government agency, and I know for certain they will get all my data from the day I was born. This is something I am already aware of, and I try my best to remember it when I post on social media. I don’t believe there is a solution for this; we may all just have to live with it and watch what we post. Unfortunately, there may be people who have already ruined opportunities for themselves.

I believe that if my personal data is going to be harvested and stored, it should be kept in a safe place. I do not think it is right to be able to sell and give out people’s private information or private things. Companies should not be able to access or even save your personal things without your permission. I think that surveillance capitalism is starting to become a very big thing. It seems that you are being watched no matter what you are doing. I do not think that this should be the future; I think this is concerning and very invasive in many different ways. I agree with both Goffman’s and Foucault’s theoretical frameworks, although I’m not sure there is a correct solution. As an individual, I will start to be more careful with my personal business and with what I do and do not allow to be out in the open. I believe people can control their own actions and what is and is not posted. I do not believe that the surveillance will ever stop.

In today’s society, being on the internet is a social norm, and it seems like we as people can’t function without it. That being said, it’s easy to see why we give up our own privacy in order to maintain these social norms and keep up with the rest of the world we live in. When massive corporations like Facebook, Twitter and even Netflix introduce a new social service, it tends to be successful due to their ever-growing popularity, and this popularity is what drives the capitalist machine. These corporations run the world and have it wrapped around their finger, allowing them not only to get us to give up our own privacy but also to sell that privacy to other corporations for a profit. They are making money off other people’s lives simply by having people display their lives and give up their privacy, and that is kind of not right when you think about it. We are essentially selling our souls to fit in, giving up our own independence in the process.

I do have a level of concern for the privacy of my data, but I don’t have Facebook and I rarely post much about myself on other forms of social media. The latest Facebook controversy is a big reason why I don’t use it, but I also don’t feel the need to tell the world what I’m doing on a daily basis. I always try to be careful about what personal information I give out, and I never give out more info than I need to. All this also leads me to believe that I should be of no concern to future employers if they decide to look into my social media habits. I always try to be as safe as possible online and with the technology I use, so I will probably never end up buying a product like Alexa or Google Home, besides the fact that I don’t see a good use for them. I firmly believe we should all have the right to a level of privacy with the personal data that we post online. However, we should also be careful what we post, because it seems nothing is safe these days no matter what measures are taken, which is a scary fact to face.

The increase in data harvesting by big companies definitely has me concerned, because it is a huge rights violation and these companies also seem to be getting away with it. I honestly don’t think any social organization can exist without negative aspects, primarily because of human nature: somebody is bound to be out for themselves and willing to screw it up. Based on today’s security infringements by large companies, the theorists Goffman and Foucault were correct in the idea that knowledge is power, because it is definitely seen in companies like Google, Walmart, Facebook and so on. I believe surveillance capitalism will take over regardless of whether we want it to, just because it’s gotten this out of hand already, and who is going to stop it from going any further? The big thing you can do is push the fact that these are crimes and that there should be an investigation into all these companies. Maybe that would stop this.

Having my personal data sold does pose a concern for me. I wonder what exactly they could see, and whether it invades my own personal life. In theory it may sound beneficial: if I need a job, any future employer would know all about me. I do not think it is possible to have a social organization without negative aspects, because people in power tend to abuse it after being exposed to it for so long. Eventually they’ll get comfortable in their position and start bending rules to fit their needs. As you mentioned previously in the web post, “Foucault proved how each process of modernization has resulted in disturbing effects with regard to the power of the individual and the control of government.” It will only be a matter of time until there is total anarchy against the government for its reign of abuse and injustice.

Over the years society has changed greatly, especially when taking the internet into account. People’s data is often “harvested” and sometimes exploited by other businesses or entities. Companies like Facebook or MoviePass do exactly this. They store the personal data of the people who use their products, and in return they use that data as a way to benefit themselves. However, these kinds of methods actually do not concern me on any level. I say this because I don’t have any secret personal data that can be used by companies. On top of that, I actually have MoviePass myself, and I signed a contract knowing that this company does these kinds of things as a means to make a profit. Many people would ask why I would want to expose my personal information willingly. The main reason is the benefit of having a MoviePass card itself: being able to watch “one movie a day for only $9.95 a month”. Therefore, in my opinion, taking such a risk is definitely worth it in the long run.